Overview

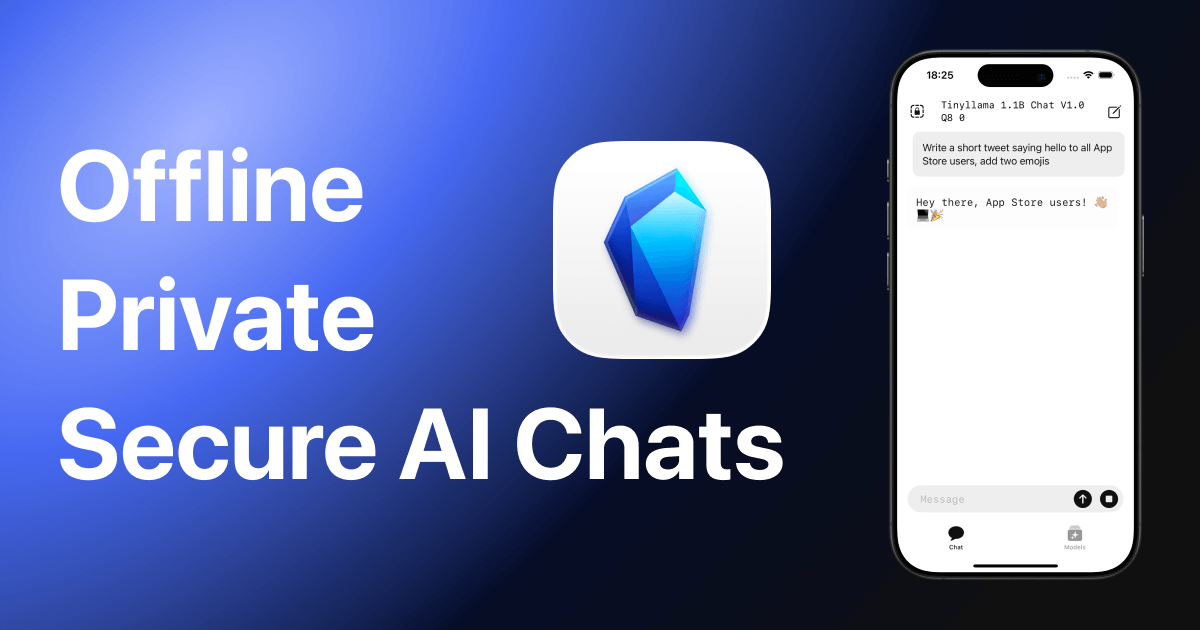

AI assistants remain almost exclusively cloud-based, and privacy concerns persist. That raises an unavoidable question: can you use large language models (LLMs) while guaranteeing that your conversations never leave your device? Lapis answers with a local-first approach. Launched in November 2025 and available on macOS (M1 or later), iOS, iPadOS, and Apple Vision Pro, Lapis runs open-source LLMs entirely on your device, offering a fully offline, private alternative to mainstream cloud-dependent tools. Your data stays yours: it is not transmitted to external servers, collected by third parties, or exposed to organizational data harvesting. Anyone can build a personal, private AI setup that is independent of cloud infrastructure and commercial AI services, which addresses growing concerns about the data privacy and surveillance capitalism inherent in traditional cloud-based chatbots.

Key Features

Lapis delivers local-first LLM execution through a unified interface that emphasizes privacy, model flexibility, and user control.

Run Any Hugging Face LLM Offline with Flexible Model Selection: Find a model you like on Hugging Face (Gemma, Phi, LLaMA, Qwen, DeepSeek, GPT-OSS, and others) and paste its repository URL directly into Lapis. The app handles model downloading, storage optimization, format verification, and compatibility checking automatically, with no technical expertise required. This opens up an ecosystem of 50,000+ open-source models on your own device. Switching between installed models is fast, so you can compare and iterate without changing servers or accounts.

No Cloud or Server Data Transmission Guaranteeing Complete Privacy: All conversations and data are processed exclusively on your local device, with no contact with external servers. Because nothing is transmitted, your information is never captured by intermediaries or analyzed for marketing insights. This contrasts sharply with cloud-based tools that may log conversations for model training, business analytics, or organizational monitoring.

Model Library Management with Centralized Organization: A clean, intuitive interface lets you download, organize, compare, and manage local LLMs. A dedicated library view displays installed models along with their sizes, capabilities, and performance characteristics. Quick-swap functionality switches models within a conversation while preserving context, which makes it easy to test different models. Storage management tools let you delete individual models to free device storage without reinstalling the app.

Total Data Privacy with Zero Telemetry or Analytics: Lapis follows a privacy-by-design philosophy, with no telemetry, analytics tracking, crash reporting, or usage monitoring. Chat histories are stored exclusively on the device, with optional encryption. There is no behavioral tracking, no advertising profile, and no third-party data sale. Device-level security controls remain entirely in the user’s hands.

Optimized Performance Across Apple Ecosystem: Native apps for macOS, iOS, iPadOS, and Apple Vision Pro provide a consistent experience across Apple devices. Metal framework optimization uses Apple Silicon for efficient GPU acceleration, giving smooth performance even on mid-range devices. Models load in the background, so you can keep chatting while new models download.

Cost-Free Forever Commitment: The application is completely free, and the developers have explicitly committed to keeping it that way indefinitely. There are no hidden paywalls, subscription traps, or feature gates. A one-time download provides lifetime access to all features.

Open Architecture Supporting Model Format Compatibility: Lapis supports transformer-based architectures including LLaMA 2, Phi-2, Gemma, and Qwen, along with Hugging Face compatible formats. The design aims for forward compatibility, so that future models in supported formats work without an application update.

Siri and Shortcuts Integration for System-Level Automation: Native integration with Apple’s Siri voice assistant and the Shortcuts automation framework enables voice-based model invocation, scripted automation, and workflow integration that standalone applications cannot offer.

Pricing

Lapis uses a completely free pricing model with an explicit zero-cost commitment.

Completely Free Lifetime Access: A single download provides permanent access to all features, with no recurring charges, subscription tiers, or feature paywalls. The developers have explicitly committed to keeping access free indefinitely, regardless of how the platform or its feature set evolves.

No Hidden Costs or Subscriptions: There are no hidden fees, premium tiers, or freemium traps. Access to 50,000+ open-source models is free, which avoids the licensing fees and usage charges typical of commercial AI platforms.

Available on App Store: Lapis is a free download from the Apple App Store for macOS (macOS 15.5 or later, M1 or later required), iOS (iOS 18.5 or later), iPadOS (iPadOS 18.5 or later), and Apple Vision Pro (visionOS 2.5 or later).

Note: Lapis is free for everyone. There is no organizational licensing, volume discount, or enterprise pricing, because the platform is designed for individual users and teams who value privacy.

How It Works

Lapis’s workflow emphasizes simplicity and user autonomy through straightforward model management and a conversational interface.

Model Selection and Installation Phase: Users browse Hugging Face for a model that matches their requirements, then paste the model’s repository URL into Lapis. Alternatively, they can load previously downloaded model files from local storage. Lapis downloads the model, validates its compatibility, and prepares it for local execution.
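
To make the URL-paste step concrete, here is a minimal Python sketch of how a pasted Hugging Face model URL could be reduced to an `owner/name` repository identifier before any download starts. The function name `repo_id_from_url` and the example URL are hypothetical, and nothing here describes how Lapis itself parses URLs.

```python
from urllib.parse import urlparse

# Path prefixes on huggingface.co that are not model repositories.
NON_MODEL_PREFIXES = {"datasets", "spaces"}


def repo_id_from_url(url: str) -> str:
    """Return 'owner/name' from a Hugging Face model page URL (illustrative helper)."""
    parsed = urlparse(url.strip())
    if parsed.netloc not in ("huggingface.co", "www.huggingface.co"):
        raise ValueError(f"not a Hugging Face URL: {url!r}")
    # Drop empty segments so trailing slashes do not matter.
    parts = [p for p in parsed.path.split("/") if p]
    if len(parts) < 2 or parts[0] in NON_MODEL_PREFIXES:
        raise ValueError(f"URL does not name a model repository: {url!r}")
    owner, name = parts[0], parts[1]
    return f"{owner}/{name}"


if __name__ == "__main__":
    # Hypothetical repository used only for illustration.
    print(repo_id_from_url("https://huggingface.co/example-org/example-model/tree/main"))
```

Validating the host and path up front means a mistyped or unrelated link is rejected before any large download begins.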

Local Execution Environment Setup: Lapis configures the local execution environment for the device’s hardware, allocating CPU and GPU resources to balance speed against memory consumption. The Metal framework uses Apple Silicon for GPU acceleration. The system detects the available hardware automatically and selects an execution strategy to match.

Chat and Interaction Phase: Users interact with loaded models through a conversational interface similar to ChatGPT. All processing occurs locally, and the conversation context stays on the device. Models can be switched mid-conversation, which allows a quick A/B comparison of different models on identical prompts.
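
The idea of switching models while keeping context can be sketched with a small data structure: the message history lives in the conversation, not in the model, so any model can be handed the same history. This is an illustrative Python sketch only. The `Conversation` class and the stub models are hypothetical and are not Lapis’s implementation.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# A "model" here is any function that maps the message history to a reply.
Model = Callable[[List[dict]], str]


@dataclass
class Conversation:
    models: Dict[str, Model]
    active: str
    messages: List[dict] = field(default_factory=list)

    def switch(self, name: str) -> None:
        """Change the active model; the history is left untouched."""
        if name not in self.models:
            raise KeyError(name)
        self.active = name

    def ask(self, prompt: str) -> str:
        """Append the prompt, query the active model, and record its reply."""
        self.messages.append({"role": "user", "content": prompt})
        reply = self.models[self.active](self.messages)
        self.messages.append({"role": "assistant", "content": reply, "model": self.active})
        return reply

    def compare(self, prompt: str) -> Dict[str, str]:
        """Send the same prompt and history to every model without changing the history."""
        trial = self.messages + [{"role": "user", "content": prompt}]
        return {name: model(trial) for name, model in self.models.items()}


if __name__ == "__main__":
    # Stub models standing in for real local LLMs.
    conv = Conversation(
        models={"a": lambda h: f"A saw {len(h)} messages",
                "b": lambda h: f"B saw {len(h)} messages"},
        active="a",
    )
    print(conv.ask("hello"))
    conv.switch("b")
    print(conv.ask("and now?"))
    print(conv.compare("same prompt for both"))
```

Because `compare` works on a copy of the history, an A/B test does not pollute the conversation the user continues afterwards.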

Model Management and Library Organization: Users organize downloaded models through a centralized library interface. Each model is displayed with its metadata (size, architecture, parameter count, capabilities). Storage management lets users delete individual models to free space without putting other data at risk.

Export and Integration Phase: Completed conversations can be exported as text, sent to notes through Shortcuts automation, or archived locally for reference. Integration with the Apple ecosystem lets Lapis slot into existing workflows.
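
As a rough illustration of text export, the Python sketch below renders a list of chat messages as a plain-text transcript. The message layout (`role` and `content` keys) and the output format are assumptions made for this example; the article does not specify the format Lapis actually produces.

```python
from typing import List


def export_as_text(messages: List[dict], title: str = "Conversation") -> str:
    """Render messages as a plain-text transcript with a title header."""
    lines = [title, "=" * len(title), ""]
    for message in messages:
        speaker = "You" if message["role"] == "user" else "Assistant"
        lines.append(f"{speaker}: {message['content']}")
        lines.append("")  # blank line between turns
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    chat = [
        {"role": "user", "content": "Summarize my notes."},
        {"role": "assistant", "content": "Here is a short summary."},
    ]
    print(export_as_text(chat, "Notes chat"), end="")
```

A plain-text transcript like this is easy to pass on to a notes app or archive alongside other local files.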

The workflow from model selection to a productive chat typically completes within minutes; only the device’s resources limit model sophistication and response speed.

Use Cases

Lapis serves scenarios where privacy, control, and offline capability matter most.

Private Chat and Creative Ideation: Use Lapis as a secure brainstorming partner, creative writing assistant, research ideation tool, or personal tutor without worrying that ideas are being logged, monitored, or used for model training by commercial AI providers. This is particularly valuable for creative professionals, journalists, lawyers, and others whose confidential work requires strong privacy assurance.

Developer LLM Testing and Experimentation: Developers can download, test, compare, and benchmark open-source LLMs in a controlled local environment without API costs, rate limits, or vendor restrictions. This suits evaluating model quality before a production deployment decision, or researching emerging open-source alternatives.

Secure Data Annotation and Experimental Workflows: Researchers and professionals working with confidential, proprietary, or sensitive data can use Lapis as a secure sandbox for annotating text, running experimental workflows, or analyzing information where cloud exposure would risk regulatory violations, compliance failures, or intellectual property loss.

Personal Privacy Advocacy: Privacy-conscious individuals, security professionals, and privacy advocates can use Lapis to act on a commitment to data sovereignty and to avoid the cloud-based surveillance capitalism that characterizes mainstream AI tools.

Healthcare and Legal Professionals: Doctors, lawyers, and other professionals handle protected health information (PHI) and attorney-client privileged communications that are subject to legal and regulatory privacy requirements. Because Lapis processes everything on the device, it supports analysis of such material without external server exposure or third-party retention, though compliance (for example with HIPAA) still depends on how the device itself is secured and used.

Offline Education and Isolated Environments: Teachers and students in air-gapped networks, rural areas with limited connectivity, or international settings with bandwidth constraints can use Lapis for AI-assisted education without relying on an unstable connection.

Research in Regulated and Restricted Environments: Scientists and researchers in regulated industries (pharmaceuticals, defense, government research) who cannot use commercial cloud AI tools because of compliance, classification, or data restrictions can use Lapis for research support that keeps data on the device.

Personal Finance and Sensitive Analysis: Individuals analyzing personal financial data, investment portfolios, or health information can use Lapis knowing that this data is never transmitted to external servers.

Pros and Cons

Understanding both the advantages and the limitations makes it easier to judge whether Lapis fits your offline AI and privacy needs.

Advantages

Fully Private with Complete Data Sovereignty: All processing occurs locally on the user’s device, which keeps data confidential. There are no third parties, no telemetry, no analytics, and no data harvesting. This sets Lapis apart from cloud tools, whatever their privacy claims.

Open-Source Flexibility Without Vendor Lock-in: Users are not locked into a single commercial model provider. They are free to use any compatible open-source model, which allows task-specific model selection, cost minimization, and performance tuning independent of vendor decisions.

Total Model Control and Autonomy: Users manage their own models with no reliance on third-party services, subscriptions, or API keys. They are free to experiment with, test, and deploy whichever models reflect their own priorities rather than a vendor’s commercial interests.

Offline-First Architecture with No Connectivity Dependency: Once a model is downloaded, Lapis functions completely offline, so it can be used in disconnected environments (airplanes, remote locations, air-gapped networks). Cloud tools, by contrast, require constant connectivity or fall back to degraded modes.

Cost-Free Forever Commitment: Lapis is genuinely free, with an explicit developer commitment to permanent zero-cost access. There are no hidden subscriptions, no freemium traps, and no stated plans to convert free users into paying customers.

Disadvantages

Resource-Intensive Computation with Hardware Limitations: Running large language models requires significant RAM and processing power. Performance is tied directly to device hardware: smaller models run fast on modest devices, while more sophisticated models need substantial resources, so the device’s specifications set a performance ceiling.

Limited by Device Hardware Capabilities: Unlike cloud-based tools backed by large server farms, Lapis is constrained by the local device. Model sophistication, response speed, and parallel processing capacity are all fundamentally limited by the hardware.

Learning Curve for Non-Technical Users: Although Lapis is simpler than command-line tools, choosing and tuning a model requires a basic understanding of model architectures, parameter counts, quantization formats, and hardware compatibility. Non-technical users may struggle to pick a suitable model.

Model Availability and Curation Responsibility: Users are responsible for identifying, evaluating, and selecting from the 50,000+ available models. Unlike curated cloud platforms that pre-select models, Lapis leaves curation to the user, which can lead to uneven model quality and choice paralysis.

Storage Constraints with Large Models: Large models consume 10-30+ GB of storage each. Device storage limits how many models can be installed at once, and downloading many models places a significant burden on typical devices.
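
A back-of-the-envelope estimate shows where figures in this range come from: the weights of a model occupy roughly (parameter count × bits per weight ÷ 8) bytes. The Python sketch below applies that rule of thumb and then checks which models fit in a given amount of free space. It ignores file-format overhead, and the model names and sizes are illustrative rather than measurements of any real download.

```python
from typing import Dict, List


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the weights in GB (1 GB = 10**9 bytes)."""
    return params_billion * bits_per_weight / 8


def models_that_fit(free_gb: float, candidates: Dict[str, float]) -> List[str]:
    """Greedily pick models, smallest first, until the free space runs out."""
    chosen = []
    for name, size_gb in sorted(candidates.items(), key=lambda item: item[1]):
        if size_gb <= free_gb:
            chosen.append(name)
            free_gb -= size_gb
    return chosen


if __name__ == "__main__":
    candidates = {
        "7B at 4-bit": weight_size_gb(7, 4),    # 3.5 GB
        "7B at 16-bit": weight_size_gb(7, 16),  # 14.0 GB
        "70B at 4-bit": weight_size_gb(70, 4),  # 35.0 GB
    }
    print(models_that_fit(20.0, candidates))
```

The same arithmetic explains why quantization matters on phones and tablets: dropping a 7-billion-parameter model from 16-bit to 4-bit weights cuts its footprint from about 14 GB to about 3.5 GB.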

No Cloud Backup or Synchronization: Chat histories and downloaded models exist only on the device, with no automatic backup. Unless the user sets up their own backups, device loss, corruption, or failure means permanent data loss.
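
A user-implemented backup can be as simple as archiving a folder of exported chats on a schedule. The Python sketch below zips a directory under a timestamped name using only the standard library. The paths in the usage comment are placeholders: the article does not say where Lapis stores its data, and this approach applies only where you can run scripts against exported files, such as on a Mac.

```python
import shutil
from datetime import datetime
from pathlib import Path


def backup_directory(source: Path, dest_dir: Path) -> Path:
    """Zip the contents of `source` into `dest_dir` and return the archive path."""
    if not source.is_dir():
        raise FileNotFoundError(source)
    dest_dir.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    base = dest_dir / f"{source.name}-{stamp}"
    # make_archive appends ".zip" and returns the full archive filename.
    archive = shutil.make_archive(str(base), "zip", root_dir=source)
    return Path(archive)


# Example usage with placeholder paths (adjust to wherever your exports live):
#   backup_directory(Path.home() / "LapisExports", Path("/Volumes/Backup/lapis"))
```

Keep the destination outside the source folder, ideally on a separate drive, so that a single device failure does not take the backups with it.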

New Platform with Limited Extended History: Because Lapis launched in November 2025, it has a relatively short production history, likely undiscovered edge cases at scale, and fewer community-developed best practices than mature tools.

How Does It Compare?

The local LLM landscape ranges from specialized iOS applications to comprehensive framework-based approaches. Understanding Lapis’s positioning requires looking at specific alternatives across platforms and specializations.

LM Studio

LM Studio is a desktop application for Windows, macOS, and Linux that emphasizes desktop-class LLM execution, strong GPU utilization, and developer-friendly interfaces. Features include automated model downloads, a chat interface, a local REST API server for integration, and OpenAI API compatibility. It is free for home and commercial use and targets developers and users who prioritize desktop performance.

LM Studio and Lapis serve different platforms and levels of user sophistication. LM Studio is optimized for the desktop, where it can exploit powerful GPUs and ample RAM, and it targets power users. Lapis is optimized for Apple Silicon and mobile-first workflows, and it targets Apple ecosystem users. The two overlap only on macOS.

Ollama

Ollama is a lightweight command-line tool for running LLMs locally, with an emphasis on simplicity and cross-platform compatibility (macOS, Windows, Linux). Features include model installation via simple commands, a REST API server, minimal configuration, and Docker integration. It is free and open-source and targets developers and users comfortable with the command line.
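
To show what a local REST API looks like in practice, the Python sketch below builds a request for Ollama’s generate endpoint, which by default listens on `localhost:11434`. It assumes an Ollama server is already running with the named model pulled; the model name in the usage comment is only an example, and the helper names are made up for this illustration.

```python
import json
import urllib.request

# Ollama's default local address; change it if your server is configured differently.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the generated text (needs a running server)."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Example usage, assuming a model with this name has been pulled locally:
#   print(generate("llama3", "Explain local-first software in one sentence."))
```

The request never leaves the machine, which is the same privacy property Lapis offers, exposed here as an API rather than a chat window.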

Ollama and Lapis take different approaches to access. Ollama offers a command-line interface for technical users comfortable in a terminal; Lapis offers a graphical interface for users who prefer visual interaction. Ollama is minimalist, cross-platform infrastructure, while Lapis is a feature-rich, Apple-specific application. The two are complementary rather than directly competitive.

Private LLM

Private LLM is an iOS and macOS application that specializes in local LLM execution on Apple platforms. Features include model library management, a chat interface, Siri integration, and support for a range of open-source models (Llama, Gemma, Mistral). It is a paid app (typically $4.99) and emphasizes privacy and Apple ecosystem optimization.

Private LLM and Lapis both specialize in offline LLM execution in the Apple ecosystem and address similar user needs with privacy-first design, model flexibility, and offline functionality. The primary difference is price: Lapis is free for life, while Private LLM requires a purchase. Lapis is the newer platform; Private LLM is established with a mature user base. Both are viable privacy-focused solutions.

PrivateGPT

PrivateGPT is a developer-focused framework for building private AI applications. It emphasizes full customization, advanced integrations, and enterprise deployment. It is free and open-source, but setup requires development expertise.

PrivateGPT and Lapis target different levels of user sophistication. PrivateGPT is a framework for developers and technical teams building custom private AI infrastructure, offering maximum flexibility behind a high technical barrier. Lapis is a user-friendly application for end users who value simplicity, offering accessibility at the cost of some flexibility. Their target audiences do not overlap.

AnythingLLM

AnythingLLM is a privacy-focused application built around file chat (asking questions about documents). Features include PDF and document support, a local-first approach, and a user-friendly interface. It is free with optional paid tiers and targets non-technical users seeking private AI document analysis.

AnythingLLM and Lapis both emphasize accessibility and privacy, but with a different focus: AnythingLLM is optimized for document-based workflows, while Lapis is focused on general-purpose chat. They are complementary tools serving different primary use cases.

Key Differentiators

Lapis’s positioning rests on several distinctive capabilities. Apple-specific optimization, with Metal GPU acceleration and ecosystem integration, sets it apart from cross-platform tools that give up platform-specific tuning. Its free lifetime access commitment sets it apart from freemium competitors that introduce subscription pressure or monetization barriers.

A clean, user-friendly interface lets non-technical users get started immediately, in contrast to command-line tools and complex frameworks. Model access through a simple URL paste sets it apart from competitors that require manual model downloads or technical configuration.

For developers prioritizing desktop performance and a local API server, LM Studio provides stronger infrastructure. For command-line enthusiasts seeking a minimalist approach, Ollama offers the greatest simplicity. For document-focused workflows, AnythingLLM provides specialized capabilities. For enterprise-level custom applications, PrivateGPT provides maximum flexibility.

However, for Apple users who prioritize privacy, want a user-friendly graphical interface, value the zero-cost commitment, and want to be productive immediately without technical setup, Lapis is a compelling specialized solution at the intersection of Apple optimization, user accessibility, and privacy commitment.

Final Thoughts

Lapis makes a strong statement in the privacy-conscious AI landscape: you do not need to compromise your data privacy or rely on surveillance capitalism platforms to use AI. By shifting computation to local devices and eliminating cloud dependency, it removes the trade-off between capability and privacy that characterizes mainstream AI.

The November 2025 launch places Lapis within an emerging privacy-first AI movement that reflects growing user dissatisfaction with data-harvesting business models. While cloud-based platforms (ChatGPT, Claude, Gemini) dominate through massive marketing budgets and convenience, Lapis focuses on an audience that values privacy and control above convenience.

Key advantages include complete data privacy with zero telemetry, open-source flexibility for broad model experimentation, free lifetime access, offline functionality, and Apple ecosystem optimization for smooth performance on Apple devices.

Legitimate considerations include device hardware constraints that limit model sophistication, a learning curve in model selection for non-technical users, storage requirements for multiple models, reliance on user-implemented backups, and the platform’s newness and short production history.

For Apple users who prioritize data privacy, people handling sensitive information under regulatory requirements, privacy advocates opposed to surveillance capitalism, developers testing open-source models, and researchers in restricted environments, Lapis delivers compelling value through accessible, privacy-first local AI that keeps the user in control.

Because it is completely free, Lapis can be evaluated without risk on real workflows; the only costs are device storage and processing capacity. For individuals ready to adopt privacy-first AI, comfortable with the limits of local computation, and who value data sovereignty over cloud convenience, Lapis warrants serious evaluation as a solution built specifically for Apple users seeking privacy-first AI.

https://lapis.lvrpiz.com/