Deploy fast, private AI models across iOS, Android, and edge devices - with just a few lines of code.

www.runanywhere.ai

Overview

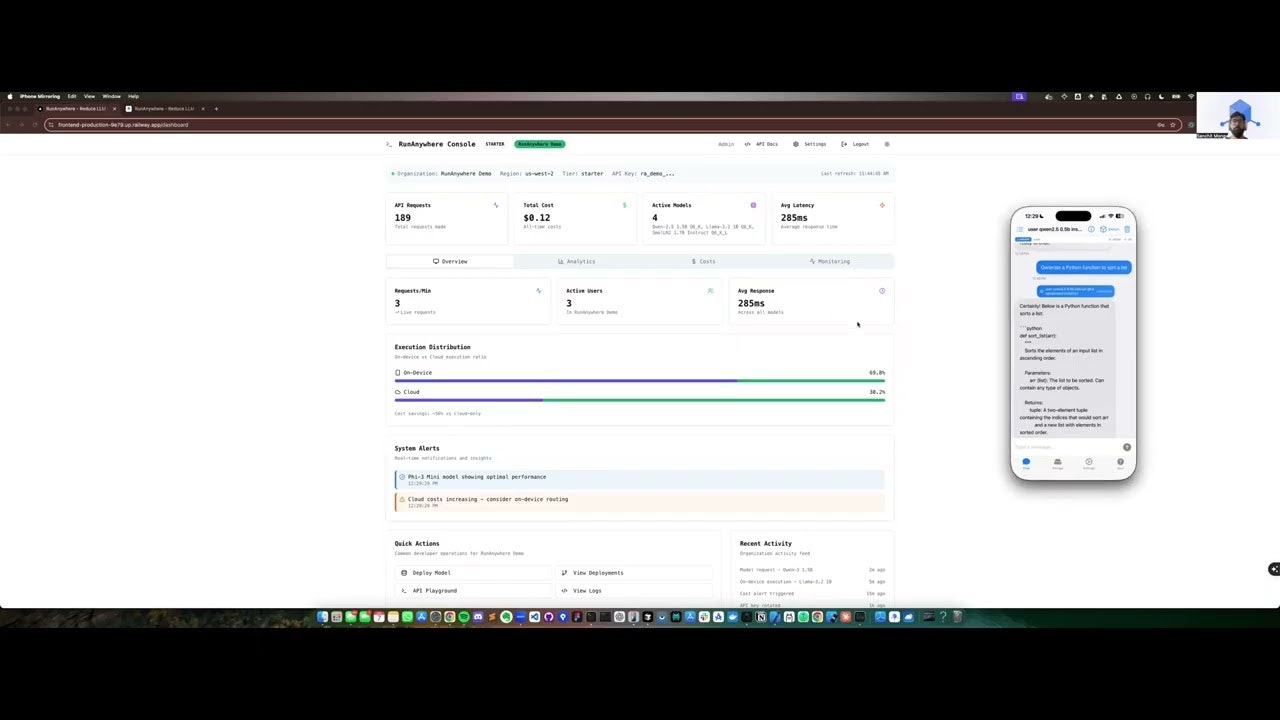

In an era where AI integration is paramount, the need for solutions that prioritize privacy, speed, and cost-efficiency is more critical than ever. RunAnywhere emerges as a groundbreaking hybrid AI platform designed to intelligently manage Large Language Model (LLM) requests across device and cloud environments. It meticulously routes requests for optimal performance, provides real-time cost monitoring, ensures low-latency responses, and champions data privacy through its intelligent local-first processing capabilities. It’s an ideal solution for developers and enterprises looking to harness AI power while optimizing for security, performance, and cost efficiency.

Key Features

RunAnywhere is packed with functionalities designed to give you unparalleled control and performance over your AI deployments. Here’s a closer look at what makes it stand out:

- Intelligent Routing of AI Requests: The platform smartly directs AI requests between on-device models and cloud services using policy-based routing, ensuring optimal performance, cost efficiency, and privacy based on request complexity and sensitivity.

- Real-time Cost Tracking and Optimization: Gain complete visibility into your AI expenditures with live cost monitoring at the token level, allowing for efficient usage and budget management with potential savings of 30-91% compared to cloud-only solutions.

- Near-Instant Latency with Privacy-Preserving Local Execution: Experience sub-200ms first-token responses as AI processing occurs locally on the device for simple requests, significantly enhancing user experience while keeping sensitive data private.

- Multi-Format LLM Support with Hybrid Architecture: RunAnywhere supports various model formats (GGUF, ONNX, CoreML, MLX) locally while providing intelligent cloud fallback for complex requests that exceed device capabilities, optimizing the balance between privacy and performance.

- Easy Integration for Mobile Applications: Designed for seamless adoption with 3-minute setup, it provides native iOS and Android SDKs with identical APIs, simplifying the process of embedding secure and rapid AI inference capabilities into mobile applications.

- Dynamic Policy Management: Configure routing policies in real-time without app updates, allowing developers to optimize for privacy, cost, and performance based on changing requirements and usage patterns.
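The policy-based routing and dynamic policy management described in this list can be pictured with a minimal sketch. This is not the RunAnywhere SDK: the `Request`, `Policy`, `route`, and `policy_from_json` names, the two policy fields, and the token threshold are all hypothetical, chosen only to show how a routing rule might weigh sensitivity, request size, and device readiness.

```python
import json
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    DEVICE = "device"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Request:
    prompt_tokens: int   # rough prompt size, used here as a proxy for complexity
    sensitive: bool      # set by the caller for privacy-sensitive input


@dataclass(frozen=True)
class Policy:
    # Hypothetical knobs; a real policy engine would likely expose more.
    max_local_prompt_tokens: int = 512
    keep_sensitive_local: bool = True


def policy_from_json(text: str) -> Policy:
    """Build a policy from a JSON document, e.g. one fetched at runtime."""
    return Policy(**json.loads(text))


def route(request: Request, policy: Policy, device_model_ready: bool) -> Target:
    """Pick a processing location for a single request."""
    # Privacy rule first: sensitive input stays on the device when a model is available.
    if request.sensitive and policy.keep_sensitive_local and device_model_ready:
        return Target.DEVICE
    # Small prompts are treated as "simple" and handled locally.
    if device_model_ready and request.prompt_tokens <= policy.max_local_prompt_tokens:
        return Target.DEVICE
    # Everything else goes to the cloud. A stricter policy might refuse
    # sensitive requests here instead of sending them off the device.
    return Target.CLOUD
```

Because the policy is plain data, a new JSON document can change routing behavior without shipping new code, which is the idea behind updating policies without app updates. For example, `policy_from_json('{"max_local_prompt_tokens": 50, "keep_sensitive_local": true}')` would send a 100-token non-sensitive request to the cloud instead of the device.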

How It Works

Understanding its features is one thing, but how does RunAnywhere actually bring these benefits to life? The process is remarkably straightforward yet sophisticated. To get started, you integrate the RunAnywhere SDK directly into your mobile application using the native iOS or Android runtime. Once integrated, the platform intelligently analyzes incoming AI requests using its policy engine, which evaluates factors like request complexity, privacy requirements, device capabilities, and cost considerations. Based on this analysis, it then routes these requests to the most appropriate processing location: either on-device models for simple, privacy-sensitive tasks or cloud services for complex operations requiring larger models. Throughout this process, RunAnywhere diligently tracks expenses in real-time at the token level, ensuring cost efficiency. The system can seamlessly switch between processing locations without user intervention, maintaining optimal performance while preserving data privacy for sensitive requests through local processing.

Use Cases

RunAnywhere’s unique hybrid capabilities make it suitable for a diverse range of mobile applications and industries. Here are some key scenarios where it truly shines:

- Privacy-Sensitive Mobile Applications: Perfect for healthcare apps, financial services, and legal tech where sensitive data must be processed locally while maintaining access to powerful AI capabilities for complex analysis when needed.

- Enterprise Mobile Solutions with Cost Controls: Businesses can leverage RunAnywhere to deploy AI-powered mobile apps with precise control over costs, automatically routing expensive operations to cloud only when necessary while handling routine tasks on-device.

- Offline-Capable AI Applications: Ideal for travel apps, field service tools, and remote work applications that need to function seamlessly even without reliable internet connectivity, with graceful degradation and recovery.

- Scalable Consumer AI Features: Empowers consumer apps with intelligent features like content generation, language translation, or personal assistants that adapt processing location based on user tier, device capability, and feature complexity.

- Development and Testing Environments: Provides developers with comprehensive analytics and A/B testing capabilities to optimize AI model performance, cost efficiency, and user experience across different deployment scenarios.
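The "graceful degradation and recovery" mentioned for offline-capable applications can be illustrated with a small sketch. It assumes a request that would normally be served by the cloud, and it is not RunAnywhere's actual mechanism: the `answer` function and the `cloud` and `local` callables are hypothetical stand-ins for whatever clients an app really uses.

```python
from typing import Callable, Tuple


def answer(prompt: str,
           cloud: Callable[[str], str],
           local: Callable[[str], str]) -> Tuple[str, str]:
    """Try the cloud first and degrade to the on-device model if it is unreachable.

    Returns (source, text) so the caller can tell which path produced the reply.
    """
    try:
        return "cloud", cloud(prompt)
    except (ConnectionError, TimeoutError):
        # No usable connectivity: fall back to the local model.
        return "device", local(prompt)
```

Recovery needs no extra state in this design: every call attempts the cloud again, so requests return to the cloud path as soon as connectivity is back. A production version would probably add timeouts and avoid retrying the network on every call while the device is known to be offline.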

Pros \& Cons

Every powerful tool comes with its own set of advantages and considerations. Let’s weigh the benefits and potential limitations of RunAnywhere.

Advantages

- Optimized Cost-Performance Balance: Achieves 30-91% cost reduction compared to cloud-only solutions through intelligent hybrid processing, with documented savings of up to \$34,476 annually for high-volume applications (10M requests).

- Enhanced Privacy with Flexibility: Processes sensitive data locally while maintaining access to powerful cloud models for complex tasks, providing the best of both worlds for privacy-conscious applications.

- Superior Mobile Performance: Delivers sub-200ms first-token latency for common queries through on-device processing, significantly improving user experience compared to cloud-only solutions.

- Developer-Friendly Integration: Offers 3-minute setup with native iOS and Android SDKs, comprehensive analytics, and real-time policy management without requiring app updates.

- Proven Scalability: Supports unlimited app installs and provides infrastructure cost projections based on actual usage patterns, making it suitable for applications ranging from startups to enterprise scale.
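To make the cost claims above more concrete, here is a minimal sketch of token-level cost accounting. It is an illustration under simplifying assumptions, not the platform's pricing model: the `CostTracker` class is hypothetical, the cloud price is a caller-supplied placeholder, and on-device tokens are treated as free, which ignores battery and hardware costs.

```python
from dataclasses import dataclass


@dataclass
class CostTracker:
    cloud_price_per_1k_tokens: float  # hypothetical price in dollars
    cloud_tokens: int = 0
    local_tokens: int = 0

    def record(self, tokens: int, on_device: bool) -> None:
        """Add one request's token count to the matching bucket."""
        if on_device:
            self.local_tokens += tokens
        else:
            self.cloud_tokens += tokens

    def hybrid_cost(self) -> float:
        # Assumption: only cloud tokens are billed.
        return self.cloud_tokens / 1000 * self.cloud_price_per_1k_tokens

    def cloud_only_cost(self) -> float:
        # Baseline: what the same traffic would cost if every token went to the cloud.
        total = self.cloud_tokens + self.local_tokens
        return total / 1000 * self.cloud_price_per_1k_tokens

    def savings_fraction(self) -> float:
        baseline = self.cloud_only_cost()
        return 0.0 if baseline == 0 else 1 - self.hybrid_cost() / baseline
```

Under this simplified model the saving equals the share of tokens served on the device: recording 700 local tokens and 300 cloud tokens gives a savings fraction of roughly 0.7, whatever the cloud price. How the 30-91% range quoted above was actually measured is not stated in the source.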

Disadvantages

- Mobile Platform Limitation: Currently focused exclusively on iOS and Android platforms, which may limit adoption for web applications or desktop software requiring similar capabilities.

- Learning Curve for Optimization: While setup is quick, maximizing the platform’s benefits requires understanding of policy configuration and performance tuning to achieve optimal cost-performance balance.

- Hardware Dependency for Local Processing: On-device performance is limited by mobile hardware capabilities, requiring intelligent fallback strategies for resource-intensive operations.

- Beta Stage Limitations: As a relatively new platform, some advanced features and extensive documentation may still be under development, requiring direct support for complex implementations.

How Does It Compare?

Evaluated against its competitors in the rapidly evolving 2025 mobile AI landscape, RunAnywhere shows several key differentiators across various categories of solutions.

Versus Cloud-Only AI Services

Compared to cloud-only platforms like OpenAI API, Anthropic Claude, or Google AI Services, RunAnywhere fundamentally shifts the paradigm by emphasizing hybrid processing with privacy-preserving local execution. Unlike cloud-based services where data must be transmitted to external servers for every request, RunAnywhere keeps sensitive data local for simple operations while intelligently routing complex requests to cloud services. This approach significantly reduces data transmission risks, enhances security compliance, and provides cost optimization through smart routing.

Versus Mobile AI Inference Frameworks

The 2025 mobile AI framework landscape features several sophisticated competitors:

TensorFlow Lite (Google) remains the most mature mobile AI framework, offering extensive hardware optimization and broad model support. However, it requires significant technical expertise for optimization and lacks the intelligent routing and cost tracking capabilities that RunAnywhere provides out-of-the-box.

ONNX Runtime (Microsoft) excels in cross-platform compatibility and supports multiple model formats, making it valuable for organizations using diverse AI ecosystems. While powerful, it requires more manual configuration and doesn’t offer the automated hybrid cloud-device optimization of RunAnywhere.

PyTorch Mobile (Meta) provides excellent developer experience for PyTorch practitioners and maintains consistency with research workflows. However, it focuses purely on on-device inference without the intelligent cloud fallback and cost optimization features.

Apple MLX offers exceptional performance on Apple Silicon with unified memory architecture and Metal GPU acceleration. It’s limited to Apple’s ecosystem and requires more technical implementation compared to RunAnywhere’s simplified SDK approach.

Qualcomm AI Stack delivers industry-leading edge AI performance with custom NPU optimization and comprehensive developer tools through the AI Hub. However, it’s hardware-specific to Qualcomm platforms and requires more complex integration processes.

Versus Specialized Edge AI Platforms

Edge Impulse provides comprehensive end-to-end machine learning for embedded devices with excellent tools for model training and deployment. It focuses more on IoT and embedded systems rather than mobile applications and lacks RunAnywhere’s cost-optimized hybrid approach.

MLC LLM (Machine Learning Compilation for LLMs) offers impressive on-device LLM capabilities with support for various model formats. However, it requires significant technical expertise and doesn’t provide the business-focused features like real-time cost tracking and policy management.

RunAnywhere’s Competitive Position

RunAnywhere occupies a unique position by combining purpose-built mobile AI capabilities with intelligent business optimization features. While competitors excel in specific areas – TensorFlow Lite in maturity, MLX in Apple performance, or ONNX Runtime in flexibility – RunAnywhere provides the most comprehensive solution for businesses seeking to deploy AI with clear ROI visibility and automated optimization.

The platform’s strength lies in its business-focused approach, offering features like real-time cost analytics, policy-based routing, and seamless cloud fallback that address practical deployment challenges beyond pure technical performance. This positions it well for enterprise mobile applications where cost control, privacy compliance, and operational simplicity are as important as raw AI performance.

Market Evolution and Future Considerations

The 2025 mobile AI landscape continues evolving rapidly, with increasing focus on hybrid approaches that balance privacy, performance, and cost. RunAnywhere’s early specialization in this hybrid model positions it advantageously as the market shifts toward more nuanced deployment strategies rather than purely on-device or cloud-only solutions.

However, success will depend on expanding beyond mobile platforms and potentially competing with established frameworks that may add similar hybrid capabilities. Given the platform’s current beta status and limited ecosystem, teams building production-critical applications should evaluate it against more mature alternatives.

Final Thoughts

RunAnywhere stands out as a compelling solution for mobile developers and enterprises looking to deploy AI with a strong emphasis on cost optimization, privacy protection, and performance efficiency. By intelligently routing AI processing between device and cloud environments, it addresses critical concerns around data security, operational costs, and user experience that pure cloud or device-only solutions cannot match effectively.

The platform’s business-focused approach, featuring real-time cost tracking at the token level and policy-based optimization, makes advanced AI accessible and manageable for a broader range of mobile applications. With documented cost savings of 30-91% and the ability to process simple queries in under 200ms locally, RunAnywhere delivers tangible value propositions for cost-conscious developers and privacy-aware enterprises.

While it has limitations, particularly its current focus on mobile platforms and beta-stage maturity, its core strengths in intelligent hybrid processing and comprehensive business analytics make it an invaluable asset for the future of mobile AI deployment. The 3-minute setup process and native SDK integration lower the barrier to entry, making sophisticated AI optimization accessible to developers without requiring extensive machine learning expertise.

For organizations seeking to balance the power of modern AI with practical concerns around cost, privacy, and performance, RunAnywhere represents a thoughtful approach to the hybrid future of AI deployment. As the mobile AI landscape continues to mature, platforms that successfully optimize the device-cloud balance while providing clear business value will likely define the next generation of AI-powered mobile applications.

The platform’s success will ultimately depend on expanding its ecosystem, enhancing documentation, and potentially broadening beyond mobile platforms. However, for current mobile AI deployment needs requiring cost optimization and privacy protection, RunAnywhere offers a compelling alternative to traditional cloud-only or device-only approaches.
