Overview

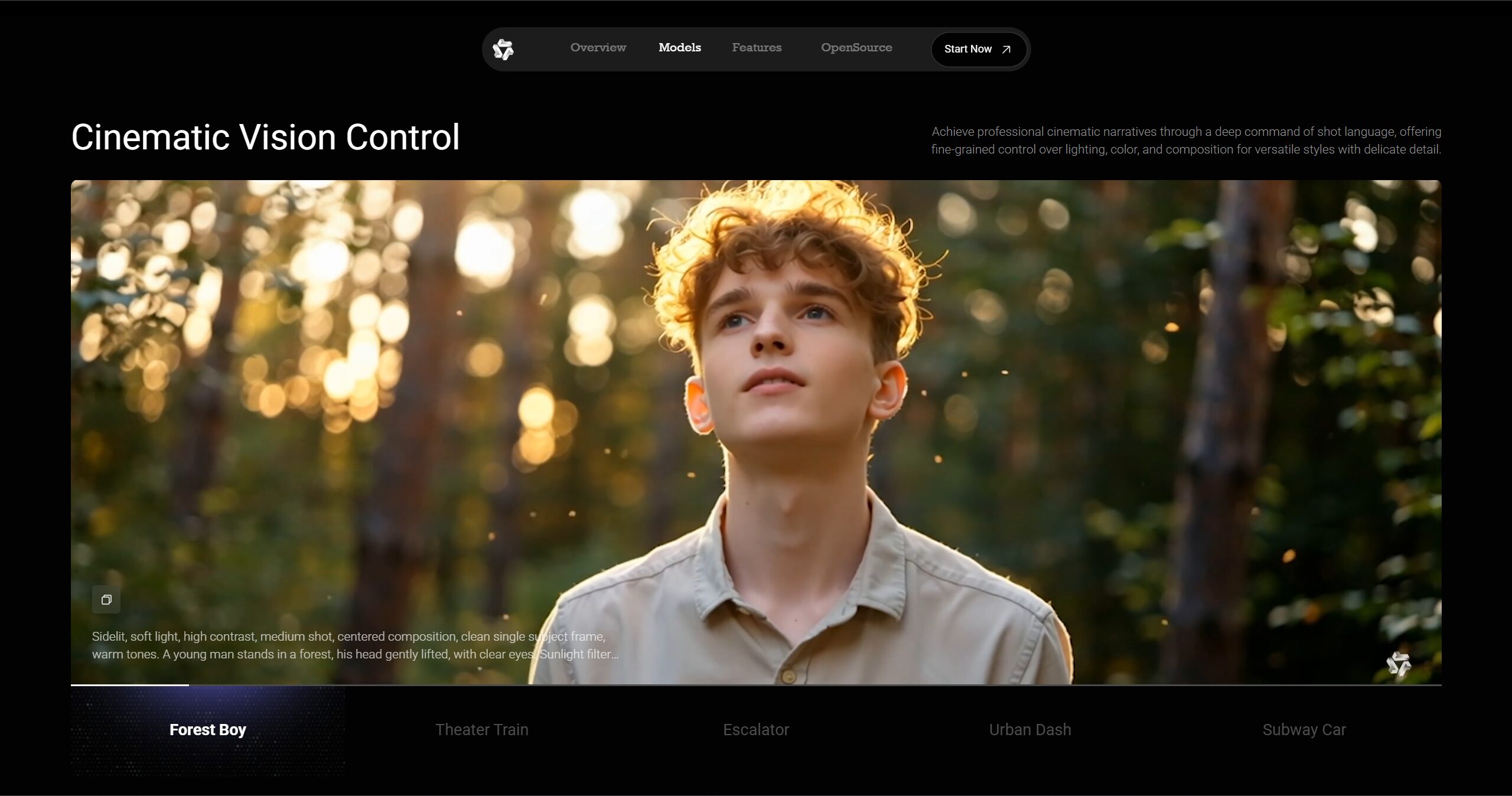

AI video generation is evolving quickly, and one of the most notable recent open-source releases is Wan 2.2. This major update to the Wan video models, developed by Alibaba’s Tongyi Lab, introduces a Mixture-of-Experts (MoE) architecture that places its performance among the leading open-source and commercial systems. Beyond raw capability, Wan 2.2 gives creators fine-grained cinematic control over lighting, color, and composition, which makes professional-looking video far more accessible. With support for text-to-video, image-to-video, and video editing, Wan 2.2 is a significant step toward democratizing high-quality video generation.

Wan 2.2 builds on research from UC Berkeley and other institutions, including diffusion transformers, and compresses video with its in-house Wan2.2-VAE. Together these enable cinematic-quality video at up to 720P (at 24fps for the TI2V-5B model) while keeping the smaller model within reach of consumer-grade GPUs.

Key Features

Wan 2.2 is packed with advanced capabilities designed to empower creators across all skill levels, from beginners experimenting with AI video generation to seasoned professionals requiring precise creative control:

- Leading benchmark performance with an MoE architecture: Reports state-of-the-art results on VBench and the team’s own Wan-Bench 2.0 evaluations. The A14B models use a two-expert design with 27B total parameters but only 14B active per denoising step, so model capacity grows while per-step compute stays close to that of a single 14B model.

- Comprehensive multilingual support: Seamlessly generates video content from prompts in both Chinese and English, with advanced visual text generation capabilities that can render dynamic text effects and typography directly within generated videos.

- Flexible model ecosystem: Offers three model variants: the flagship MoE models with 14B active parameters (T2V-A14B and I2V-A14B) for maximum quality, and the efficient TI2V-5B model for consumer hardware, covering a range of computational budgets.

- Broad video generation capabilities: Covers text-to-video generation, image-to-video transformation, video editing and extension, first-last frame interpolation, and hybrid text-image-to-video synthesis within a unified framework.

- Consumer-grade hardware support: The TI2V-5B model targets standard consumer GPUs. The official repository reports that it can generate a 5-second 720P video in under 9 minutes on a single 24GB card such as the RTX 4090, and community workflows that offload weights to system memory report fitting it into considerably less VRAM.

- Advanced compression and efficiency: Integrates the in-house Wan2.2-VAE, which compresses video by 4×16×16 (time × height × width); an additional patchification layer in the TI2V-5B model raises the total compression to 4×32×32, enabling high-quality reconstruction while keeping the sequence the model processes short.
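To make the compression figures above concrete, the sketch below estimates the latent grid and transformer token count for a TI2V-5B clip. It is a back-of-the-envelope calculation under stated assumptions, not the model’s actual code: it assumes a causal VAE that keeps the first frame and compresses the remaining frames by 4 (so frame counts of the form 4k + 1), a 2×2 spatial patch on top of the VAE’s 16×16, and a 1280×704 canvas so that both sides divide by 32. The names `plan_latents` and `LatentPlan` are hypothetical.

```python
from dataclasses import dataclass

# Assumed factors: Wan2.2-VAE compresses 4x in time and 16x in each spatial
# dimension; patchification adds 1x2x2, for 4x32x32 in total.
VAE_T, VAE_H, VAE_W = 4, 16, 16
PATCH_T, PATCH_H, PATCH_W = 1, 2, 2


@dataclass(frozen=True)
class LatentPlan:  # hypothetical helper type for this sketch
    latent_frames: int
    latent_height: int
    latent_width: int
    tokens: int


def plan_latents(frames: int, height: int, width: int) -> LatentPlan:
    """Estimate latent shape and token count under the assumptions above."""
    if (frames - 1) % VAE_T != 0:
        raise ValueError("this sketch assumes a frame count of the form 4k + 1")
    if height % (VAE_H * PATCH_H) or width % (VAE_W * PATCH_W):
        raise ValueError("height and width must be multiples of 32")
    t = 1 + (frames - 1) // VAE_T  # first frame kept, the rest compressed 4x
    h = height // VAE_H
    w = width // VAE_W
    tokens = (t // PATCH_T) * (h // PATCH_H) * (w // PATCH_W)
    return LatentPlan(t, h, w, tokens)


if __name__ == "__main__":
    # 5 seconds at 24 fps plus the leading frame: 121 frames
    plan = plan_latents(frames=121, height=704, width=1280)
    print(plan.latent_frames, plan.latent_height, plan.latent_width, plan.tokens)
    # 31 44 80 27280
```

Under these assumptions a 121-frame clip becomes a 31 × 44 × 80 latent grid and 27,280 tokens. A height of exactly 720 is not a multiple of 32, which may be why the reference script uses a 1280*704 size for this model.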

How It Works

Wan 2.2 works in several stages. First, a multilingual text encoder (umT5 in the open-source release) turns the English or Chinese prompt into embeddings that capture its content, style, and instructions such as camera movement or lighting. For image-to-video tasks, the input image is also encoded and used as conditioning, so the generated motion stays consistent with its composition and subject.

The core generation process relies on the MoE architecture, in which two expert models handle different phases of the diffusion process. A high-noise expert shapes the overall scene layout and composition during the early denoising steps, while a low-noise expert refines details, textures, and fine motion in the later steps. The hand-off happens at a timestep, t_moe, chosen from the signal-to-noise ratio (SNR) of the noise schedule: steps before it use the high-noise expert and steps after it use the low-noise expert. Because only one expert runs at each step, inference costs about the same as a single 14B model.
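The high-noise/low-noise hand-off described above can be sketched as a simple router inside the denoising loop. This is an illustration, not the released implementation: it assumes timesteps that run from 1000 (pure noise) toward 0 and a fixed switch point t_moe, and the `BOUNDARY_FRACTION` value is a hypothetical placeholder (each release sets its own boundary). The two "experts" are stand-in functions rather than real 14B transformers.

```python
from typing import Callable, List, Sequence, Tuple

Latent = List[float]
Expert = Callable[[Latent, float], Latent]

NUM_TRAIN_TIMESTEPS = 1000
BOUNDARY_FRACTION = 0.875  # hypothetical placeholder, not a documented default


def pick_expert(t: float, high_noise: Expert, low_noise: Expert) -> Expert:
    """High-noise expert while t >= t_moe, low-noise expert once t < t_moe."""
    t_moe = BOUNDARY_FRACTION * NUM_TRAIN_TIMESTEPS
    return high_noise if t >= t_moe else low_noise


def denoise(latent: Latent, timesteps: Sequence[float],
            high_noise: Expert, low_noise: Expert) -> Tuple[Latent, List[str]]:
    """Run exactly one expert per step and record which one was used."""
    trace: List[str] = []
    for t in timesteps:
        expert = pick_expert(t, high_noise, low_noise)
        trace.append(expert.__name__)
        latent = expert(latent, t)
    return latent, trace


# Stand-in experts: in Wan 2.2 each would be a 14B-parameter diffusion transformer.
def high_noise_expert(latent: Latent, t: float) -> Latent:
    return [0.9 * x for x in latent]  # placeholder for coarse layout updates


def low_noise_expert(latent: Latent, t: float) -> Latent:
    return [0.99 * x for x in latent]  # placeholder for fine-detail refinement


if __name__ == "__main__":
    steps = [1000, 950, 900, 875, 850, 500, 100]
    _, used = denoise([1.0, -1.0], steps, high_noise_expert, low_noise_expert)
    print(used)  # four high_noise_expert entries, then three low_noise_expert
```

Routing by timestep rather than by token is what keeps per-step compute at one expert’s worth: the full 27B parameters exist, but each forward pass touches only about 14B of them.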

Video synthesis happens in a compressed latent space produced by the Wan2.2-VAE, which encodes visual information at high compression ratios while preserving temporal coherence and visual fidelity. The diffusion transformer processes sequences of spatiotemporal patches, generating coherent motion and keeping objects consistent across frames. Finally, the decoder reconstructs high-resolution video, with support for multiple aspect ratios and frame rates.
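The "sequences of spatiotemporal patches" mentioned above can be illustrated with a small patchify routine that cuts a latent video into non-overlapping blocks and flattens each block into one token. This is a simplified sketch, not Wan 2.2’s code: it assumes a single latent channel for readability (real latents have many), a patch size of (1, 2, 2) along time, height, and width, and raster ordering of the tokens.

```python
from typing import List

Grid = List[List[List[float]]]  # single-channel latent indexed [t][h][w]


def patchify(latent: Grid, pt: int = 1, ph: int = 2, pw: int = 2) -> List[List[float]]:
    """Split a T x H x W latent into flattened pt x ph x pw patches (tokens)."""
    T, H, W = len(latent), len(latent[0]), len(latent[0][0])
    if T % pt or H % ph or W % pw:
        raise ValueError("latent dimensions must be divisible by the patch size")
    tokens: List[List[float]] = []
    for t0 in range(0, T, pt):          # time first,
        for h0 in range(0, H, ph):      # then rows,
            for w0 in range(0, W, pw):  # then columns
                token = [latent[t][h][w]
                         for t in range(t0, t0 + pt)
                         for h in range(h0, h0 + ph)
                         for w in range(w0, w0 + pw)]
                tokens.append(token)
    return tokens


if __name__ == "__main__":
    # Two latent frames of 2 x 4; each value encodes its position as t*100 + h*10 + w.
    latent = [[[t * 100 + h * 10 + w for w in range(4)] for h in range(2)]
              for t in range(2)]
    tokens = patchify(latent)
    print(len(tokens))  # 4
    print(tokens[0])    # [0, 1, 10, 11]
    print(tokens[2])    # [100, 101, 110, 111]
```

Each token mixes neighboring positions within one frame, and attention across the token sequence is what lets the transformer relate content over both space and time.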

Users can access Wan 2.2 either through the dedicated web platform at wan.video for streamlined creation workflows, or through the open-source code and weights on GitHub and Hugging Face for local deployment and customization. Because the weights are open, the models can also be fine-tuned, for example with LoRA, to adapt them to specific visual styles or content domains.
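For the local route described above, the sketch below assembles a TI2V-5B run of the repository’s `generate.py` script. The script name and flags (`--task`, `--size`, `--ckpt_dir`, `--prompt`, `--offload_model`, `--convert_model_dtype`, `--t5_cpu`) mirror the official README at the time of writing; treat them as assumptions and check the current README before running. The checkpoint directory, the prompt, and the helper name `build_ti2v_command` are placeholders; the weights are typically fetched from the `Wan-AI/Wan2.2-TI2V-5B` repository on Hugging Face.

```python
import shlex
from pathlib import Path
from typing import List


def build_ti2v_command(ckpt_dir: Path, prompt: str, size: str = "1280*704",
                       low_vram: bool = True) -> List[str]:
    """Return an argv list for generate.py (flags assumed from the README)."""
    cmd = [
        "python", "generate.py",
        "--task", "ti2v-5B",
        "--size", size,
        "--ckpt_dir", str(ckpt_dir),
        "--prompt", prompt,
    ]
    if low_vram:
        # Memory-saving options suggested for a single 24GB GPU: offload the
        # model between stages, convert model dtype, keep the T5 encoder on CPU.
        cmd += ["--offload_model", "True", "--convert_model_dtype", "--t5_cpu"]
    return cmd


if __name__ == "__main__":
    cmd = build_ti2v_command(Path("./Wan2.2-TI2V-5B"),
                             "A red fox trotting through fresh snow at dawn")
    print(shlex.join(cmd))
    # Inside a clone of the repository, with the weights downloaded, this list
    # could be passed to subprocess.run(cmd, check=True).
```

Building the command as a list rather than a single string avoids shell-quoting problems with prompts that contain quotes or punctuation.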

Use Cases

Wan 2.2’s versatile capabilities and open-source accessibility make it suitable for an extensive array of applications across creative and commercial domains:

- Professional content creation and social media: Generate engaging short-form videos, reels, and stories optimized for platforms like Instagram, TikTok, YouTube Shorts, and LinkedIn, with precise control over pacing, visual style, and branded content requirements.

- Commercial advertising and marketing: Produce dynamic, eye-catching video advertisements with specific visual narratives, product demonstrations, and brand storytelling elements, enabling rapid iteration and A/B testing of creative concepts.

- Educational content and training materials: Create animated explainers, instructional videos, and visual learning aids that simplify complex topics, enhance student engagement, and provide consistent educational experiences across diverse learning environments.

- Creative prototyping and pre-visualization: Rapidly prototype video concepts, storyboard sequences, and visual ideas before committing to full-scale production, enabling directors and creative teams to explore artistic possibilities and communicate vision effectively.

- Cinematic and artistic projects: Generate high-quality, stylized video clips with sophisticated control over cinematic elements including lighting design, camera movement, color grading, and compositional aesthetics for independent films, music videos, and art installations.

Pros & Cons

Like any powerful creative tool, Wan 2.2 offers distinct advantages while presenting certain considerations for potential users:

Advantages

- Open-source accessibility and community development: Freely available under the Apache 2.0 license for commercial and personal use, fostering community innovation, custom modifications, and collaborative improvements without licensing fees or subscription dependencies.

- Superior visual quality across multiple benchmarks: Delivers high visual fidelity through its MoE architecture and extensive training data; on the team’s reported benchmarks it outperforms existing open-source alternatives and compares favorably with commercial solutions.

- Comprehensive multilingual and multicultural capabilities: Supports both Chinese and English prompt processing with sophisticated visual text rendering, expanding global usability and enabling culturally diverse content creation for international audiences.

- Hardware accessibility and deployment flexibility: Optimized for consumer-grade GPUs with efficient model variants, making advanced AI video generation accessible to individual creators, small studios, and organizations without enterprise-level computational infrastructure.

- Extensive feature ecosystem and technical innovation: Combines multiple video generation modalities, advanced compression techniques, and cinematic control features in a unified platform, supported by continuous research developments and community contributions.

Disadvantages

- Technical setup complexity for local deployment: Requires compatible GPU hardware, proper software environment configuration, and familiarity with AI model deployment processes, potentially challenging for users without technical backgrounds or development experience.

- Prompt engineering learning curve and iteration requirements: Achieving optimal results often requires careful prompt crafting, parameter tuning, and iterative refinement, demanding creative and technical skills that may require significant practice to master effectively.

- Infrastructure and computational demands: While optimized for consumer hardware, generating high-quality videos still requires substantial computational resources, longer processing times compared to traditional editing, and adequate cooling and power supply considerations.
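A quick calculation shows why these computational demands remain even on strong consumer GPUs. The sketch below estimates weight memory only, assuming 2 bytes per parameter (bf16/fp16) and decimal gigabytes; activations, the text encoder, and the VAE are ignored, so real peak usage is higher.

```python
BYTES_PER_PARAM = 2  # assumption: bf16 / fp16 weights

MODELS = {
    "TI2V-5B": 5e9,
    "A14B, one active expert": 14e9,
    "A14B, both experts": 27e9,
}


def weight_gb(params: float, bytes_per_param: int = BYTES_PER_PARAM) -> float:
    """Weight storage in decimal gigabytes (weights only, no activations)."""
    return params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, params in MODELS.items():
        print(f"{name:>24}: {weight_gb(params):5.1f} GB")
```

This gives about 10 GB for TI2V-5B, 28 GB for a single A14B expert, and 54 GB for both experts. Even one active expert exceeds a 24GB card, which is why local runs rely on offloading weights to system memory.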

How Does It Compare?

When evaluated against the competitive landscape of AI video generation in 2025, Wan 2.2 establishes a unique position through its combination of open-source accessibility, technical sophistication, and practical performance capabilities.

OpenAI Sora Turbo (2025): Sora Turbo, officially launched in December 2024, represents OpenAI’s flagship video generation platform with capabilities extending up to 20 seconds at 1080p resolution. Available through sora.com for ChatGPT Plus ($20/month) and Pro ($200/month) subscribers, Sora offers exceptional prompt adherence and photorealistic output quality. However, Sora operates as a closed-source commercial service with usage limitations, subscription requirements, and no access to underlying model weights. Wan 2.2 differentiates itself through complete open-source availability, local deployment capabilities, and specialized MoE architecture optimizations, though Sora may maintain advantages in maximum resolution and generation length for certain use cases.

Google Veo 2 and Veo 3 (2025): Google’s Veo models represent state-of-the-art commercial video generation, with Veo 2 offering 8-second 720p generation and Veo 3 providing enhanced cinematic quality with integrated audio synthesis. Available through Google AI Studio and Vertex AI with pricing starting at $19.99/month, Veo models excel in physics simulation, motion realism, and professional cinematography. Veo 3 particularly stands out for its audio generation capabilities and seamless integration with Google’s creative ecosystem. While Google’s models offer superior cloud-based convenience and audio features, Wan 2.2 provides complete model ownership, unlimited local generation, and extensive customization possibilities that commercial services cannot match.

Runway ML Gen-4 (2025): Runway continues to evolve as a comprehensive creative platform with advanced video generation, editing capabilities, and professional workflow integration. Priced from $15/month with sophisticated motion control and style transfer features, Runway targets professional creators with enterprise-grade tools and collaborative features. However, Runway operates on credit-based consumption models with usage limitations, while Wan 2.2 offers unlimited generation capacity once deployed locally, making it more cost-effective for high-volume production workflows.

Open-Source Alternatives (2025): The open-source video generation landscape includes models like Mochi 1 by Genmo (10B parameters), LTXVideo by Lightricks, and various community-developed alternatives. While these tools offer valuable capabilities and open access, Wan 2.2’s MoE architecture, larger training set (65.6% more images and 83.2% more videos than Wan 2.1), and high-compression VAE yield stronger benchmark results. Wan 2.2’s multilingual capabilities and cinematic control features also distinguish it from most open-source competitors, which focus primarily on English-language generation.

Emerging Specialized Models: The 2025 market includes specialized tools like Kling AI for motion realism, Pika Labs for creative effects, and various platform-specific generators. While these tools excel in particular niches, Wan 2.2’s comprehensive feature set, model flexibility, and open-source nature position it as a versatile foundation for diverse video generation needs rather than specialized applications.

Final Thoughts

Wan 2.2 is a major milestone in making professional-quality AI video generation widely available. Its MoE architecture, combined with fully open weights, gives creators, educators, businesses, and researchers access to state-of-the-art video synthesis without commercial licensing or subscription fees. Its ability to generate cinematic-quality content while remaining usable on consumer hardware closes much of the gap between experimental AI research and practical creative tools.

The technical innovations in Wan 2.2, from its high-compression VAE to multilingual text rendering, show how open-source development can drive real advances in generative AI. The platform requires more technical engagement than fully managed commercial services, but that same openness brings a degree of customization, privacy control, and creative freedom that proprietary solutions cannot offer.

As video generation continues to evolve, Wan 2.2’s open-source foundation allows community-driven improvement that does not depend on any single company’s strategy. For organizations and creators who want sustainable, customizable video generation workflows without ongoing licensing costs, Wan 2.2 offers a compelling path that balances technical sophistication with practical accessibility. Its strong benchmark results and growing community ecosystem make it a leading option for anyone who wants to use AI for professional video creation while keeping full control over their creative and technical infrastructure.